Introduction: Why Serverless Inference Matters for Modern AI Infrastructure

Serverless inference is an execution model that lets teams run AI and LLM workloads on demand without provisioning or managing servers, GPUs, or underlying infrastructure. In a serverless inference model, compute resources automatically scale up and down based on usage—ensuring low-latency responses while optimizing cost efficiency.

As organizations adopt GenAI applications, LLM-powered features, and real-time inference, serverless inference becomes essential. It eliminates infrastructure friction, accelerates model development, and delivers significant cost savings.

This guide explains how serverless inference works, its benefits, key challenges, best practices, and how Rafay enables a turnkey, enterprise-grade serverless inference platform for LLM providers, GPU cloud partners, and platform engineering teams.

What Is Serverless Inference?

Serverless inference is a cloud execution model where AI and machine learning models run on demand, automatically scaling compute resources to handle inference requests through a simple API without requiring teams to manage servers or GPU infrastructure.

Instead of hosting and maintaining dedicated GPU instances or self-managed endpoints, teams access inference through the platform's API layer. Compute spins up as needed, then shuts down when idle.

Serverless inference provides:

- On-demand GPU provisioning

- Fully managed model execution

- Token- or usage-based billing (pay-per-use model)

- Automatic scaling and load distribution for concurrent requests

- Zero infrastructure management responsibility for the developer

This makes serverless inference ideal for teams delivering real-time predictions, LLM features, or production-scale AI applications.

How Serverless Inference Works

On-Demand Model Execution

Inference is triggered via simple API calls (e.g., OpenAI-compatible endpoints). Compute resources activate only when needed.
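
As a rough illustration of what such a call looks like, the sketch below builds an OpenAI-style chat completion request using only the Python standard library. The base URL, API key, and model name are placeholders rather than real Rafay values, and the request is constructed but not sent so the example runs without network access.

```python
import json
import urllib.request

# Hypothetical values: replace with the endpoint, key, and model name your platform issues.
BASE_URL = "https://inference.example.com/v1"
API_KEY = "YOUR_API_KEY"
MODEL = "llama-3.2-example"


def build_chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize serverless inference in one sentence.")
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request), then JSON-decode the response body.
```

Because the request shape follows the OpenAI convention, an existing OpenAI-style client library could typically be pointed at the same base URL instead of hand-building the request.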

Automatic Scaling of GPU/Compute Resources

The serverless platform dynamically adjusts GPU allocation based on:

- Request volume

- Concurrency needs (including concurrent invocations)

- Model size (e.g., Llama 3.2 vs DeepSeek)

This ensures high availability without overprovisioning or wasted memory.
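
To make the scaling logic concrete, here is a minimal sketch of a concurrency-based replica calculation. It is not Rafay's scheduler; the function name, the per-replica concurrency figure, and the replica bounds are all assumptions chosen for illustration. Model size enters indirectly: a larger model usually serves fewer concurrent requests per GPU, which lowers `per_replica_concurrency`.

```python
import math


def desired_replicas(in_flight: int, per_replica_concurrency: int,
                     min_replicas: int = 0, max_replicas: int = 8) -> int:
    """Return how many model replicas to run for the current load.

    in_flight: requests currently being served or queued.
    per_replica_concurrency: requests one replica can handle at once (assumed known).
    """
    if per_replica_concurrency <= 0:
        raise ValueError("per_replica_concurrency must be positive")
    needed = math.ceil(in_flight / per_replica_concurrency)
    # Clamp between the floor (0 allows scale-to-zero) and the fleet ceiling.
    return max(min_replicas, min(needed, max_replicas))


for load in (0, 3, 20, 500):
    print(load, "in flight ->", desired_replicas(load, per_replica_concurrency=4), "replicas")
```

With `min_replicas=0` the function scales to zero when idle; raising it trades idle cost for fewer cold starts.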

API-Driven Access to AI Models

Developers access ML models through a standard API interface, enabling:

- Text generation

- Embeddings

- Chat completions

- Vision inference

Rafay supports full OpenAI-compatible APIs for simplified integration and configuration.

Server-Based vs. Serverless Inference

Traditional Server-Based Inference Challenges

- Requires long-running GPU servers or dedicated servers

- High idle costs during low demand

- Significant DevOps and MLOps overhead, including server provisioning and management

- Manual scaling and capacity planning

- Requires deep infrastructure expertise

- Cold, warm, and hot path scheduling complexities

Advantages of Serverless Inference

- No servers or GPU instances to manage

- Pay only for tokens or time-based usage (pay-per-use model)

- Automatic scaling handles demand spikes and traffic patterns

- No compute charges during idle time when endpoints scale to zero

- Predictable performance with provisioned concurrency options

- Ideal for spiky or unpredictable workloads

Key Benefits of Serverless Inference

Lower Operational Overhead

Teams no longer manage server provisioning, scaling, monitoring, or GPU lifecycles.

Elastic Scaling for AI and LLM Workloads

Automatically handles demand during:

- Product launches

- Traffic spikes

- Batch inference loads

Cost Efficiency Through Pay-Per-Use

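Token-based or time-based billing means teams pay for the inference they actually use rather than for idle GPU capacity, which can yield significant cost savings for intermittent workloads.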
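
A quick way to sanity-check the savings is to compare a dedicated GPU's monthly cost with token-based billing at your expected volume. The sketch below does that arithmetic; the hourly rate and per-token price are made-up numbers, not Rafay or market pricing, so substitute your own.

```python
HOURS_PER_MONTH = 730.0  # average hours in a month


def dedicated_monthly_cost(gpu_hourly_rate: float) -> float:
    """Cost of one always-on GPU instance, regardless of traffic."""
    return gpu_hourly_rate * HOURS_PER_MONTH


def pay_per_use_monthly_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Cost when billed only for tokens processed."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(gpu_hourly_rate: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which both options cost the same."""
    return dedicated_monthly_cost(gpu_hourly_rate) / price_per_million_tokens * 1_000_000


# Hypothetical prices for illustration only.
GPU_RATE = 2.00          # USD per GPU-hour
TOKEN_PRICE = 0.50       # USD per million tokens

print("dedicated:", dedicated_monthly_cost(GPU_RATE))
print("pay-per-use at 100M tokens:", pay_per_use_monthly_cost(100_000_000, TOKEN_PRICE))
print("break-even tokens/month:", break_even_tokens(GPU_RATE, TOKEN_PRICE))
```

Below the break-even volume, pay-per-use is cheaper; well above it, sustained traffic may justify dedicated or provisioned capacity.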

Faster Developer Velocity

Teams focus on building AI-powered applications—not infrastructure management.

Best Practices for Serverless Inference

Optimize Models for Efficient Runtime

Quantization, pruning, and distillation, along with careful model selection (e.g., Qwen vs. Llama), reduce memory footprint and improve inference speed.
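
The effect of quantization on memory can be estimated with simple arithmetic: weight memory is roughly parameter count times bytes per parameter. The sketch below applies that to an illustrative 7-billion-parameter model. It covers weights only and ignores the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# 7e9 parameters is an illustrative size, not a specific model.
for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gb(7e9, precision):.1f} GB")
```

Halving the bytes per parameter halves the weight footprint, which can be the difference between fitting on one GPU and needing several.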

Minimize Cold Starts

Use:

- Model warm pools with provisioned concurrency

- Pre-loaded GPUs

- Adaptive autoscaling policies tuned to traffic patterns
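
The trade-off behind these options can be sketched with a small policy model. The class below is hypothetical and deliberately simplified: it looks at a single request, treats any warm replica as sufficient, and uses assumed load and inference times. A burst larger than the warm pool could still hit cold starts in practice.

```python
from dataclasses import dataclass


@dataclass
class ScaleToZeroPolicy:
    idle_timeout_s: float       # how long an idle replica stays loaded
    min_warm_replicas: int = 0  # replicas kept loaded regardless of traffic

    def is_cold_start(self, seconds_since_last_request: float) -> bool:
        """True if the next request must wait for a model to load."""
        if self.min_warm_replicas > 0:
            return False  # a warm replica is always available
        return seconds_since_last_request > self.idle_timeout_s

    def expected_latency_s(self, seconds_since_last_request: float,
                           model_load_s: float, inference_s: float) -> float:
        """Request latency including model load time when the path is cold."""
        cold = self.is_cold_start(seconds_since_last_request)
        return inference_s + (model_load_s if cold else 0.0)


# Assumed timings: 45 s to load the model, 1.5 s per inference.
scale_to_zero = ScaleToZeroPolicy(idle_timeout_s=300)
warm_pool = ScaleToZeroPolicy(idle_timeout_s=300, min_warm_replicas=1)
print(scale_to_zero.expected_latency_s(600, model_load_s=45, inference_s=1.5))
print(warm_pool.expected_latency_s(600, model_load_s=45, inference_s=1.5))
```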

Plan for Throughput and Scaling

Monitor concurrency, rate limits, and request patterns to adjust autoscaling thresholds and handle more concurrent requests efficiently.
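
One common way to turn monitored numbers into a capacity estimate is Little's law: average in-flight requests equal arrival rate times average latency. The sketch below uses it to size replicas; the headroom factor and per-replica concurrency are assumptions to tune against your own measurements.

```python
import math


def average_in_flight(requests_per_second: float, avg_latency_s: float) -> float:
    """Little's law: average concurrency = arrival rate x average latency."""
    return requests_per_second * avg_latency_s


def replicas_needed(requests_per_second: float, avg_latency_s: float,
                    per_replica_concurrency: int, headroom: float = 0.25) -> int:
    """Replicas required to serve the load with spare capacity for bursts."""
    target = average_in_flight(requests_per_second, avg_latency_s) * (1 + headroom)
    return math.ceil(target / per_replica_concurrency)


# Illustrative load: 50 requests/s at 2 s average latency, 8 concurrent requests per replica.
print(average_in_flight(50, 2.0))
print(replicas_needed(50, 2.0, per_replica_concurrency=8))
```

If latency rises under load, the same request rate needs more concurrency, which is why latency and throughput should be monitored together.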

Monitor Inference Performance and Logs

Track:

- Latency

- GPU usage

- Errors

- Token consumption

- Cost per tenant

Platforms like Rafay provide built-in observability, cost visibility, and detailed documentation.
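
For teams that want to compute similar figures from their own request logs, the following sketch aggregates latency, error rate, token consumption, and cost per tenant. The record fields and the token price are hypothetical and do not reflect any particular platform's log schema.

```python
import math
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical price, used only to turn token counts into a cost figure.
PRICE_PER_MILLION_TOKENS = 0.50


@dataclass
class RequestRecord:
    tenant: str
    latency_ms: float
    total_tokens: int
    ok: bool


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(len(ordered) * 95 / 100)  # 1-based rank
    return ordered[rank - 1]


def summarize(records: list[RequestRecord]) -> dict[str, dict[str, float]]:
    """Group request records by tenant and compute basic health and cost metrics."""
    by_tenant: dict[str, list[RequestRecord]] = defaultdict(list)
    for record in records:
        by_tenant[record.tenant].append(record)

    summary = {}
    for tenant, rows in by_tenant.items():
        tokens = sum(r.total_tokens for r in rows)
        summary[tenant] = {
            "requests": len(rows),
            "error_rate": sum(not r.ok for r in rows) / len(rows),
            "p95_latency_ms": p95([r.latency_ms for r in rows]),
            "tokens": tokens,
            "cost_usd": tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS,
        }
    return summary


sample = [
    RequestRecord("team-a", 100.0, 1000, True),
    RequestRecord("team-a", 200.0, 3000, False),
    RequestRecord("team-b", 150.0, 500, True),
]
for tenant, metrics in summarize(sample).items():
    print(tenant, metrics)
```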

Agents vs. Serverless Inference

When to Use Agentic Systems

Agentic AI involves:

- Long-running workflows

- Multi-step decision-making

- Tool usage

- Stateful operations

Agents are best for autonomous or complex task execution.

When to Use Serverless Inference

Serverless inference is ideal for:

- Real-time predictions

- Text generation

- Embeddings

- Stateless tasks

- Burst workloads with variable traffic patterns

Why Enterprises Often Need Both

Agents handle orchestration.

Serverless inference handles model execution.

Together, they power production AI systems at scale.
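
The division of labor can be sketched in a few lines. In this hypothetical example the agent loop owns the state (its history) and decides when to stop, while each model call is stateless and receives all context in the prompt. `fake_infer` is a stand-in for a real serverless inference call so the example runs offline.

```python
from typing import Callable


def run_agent(goal: str, infer: Callable[[str], str], max_steps: int = 3) -> list[str]:
    """Hypothetical agent loop: keeps state locally and calls a stateless model."""
    history: list[str] = []
    for _ in range(max_steps):
        prompt = f"Goal: {goal}\nHistory: {history}\nNext action?"
        action = infer(prompt)  # independent call; all context travels in the prompt
        history.append(action)
        if action == "DONE":
            break
    return history


def fake_infer(prompt: str) -> str:
    """Stand-in for a serverless inference endpoint (no network involved)."""
    return "lookup" if "History: []" in prompt else "DONE"


print(run_agent("answer a customer question", fake_infer))
```

Swapping `fake_infer` for a function that calls an inference endpoint leaves the orchestration code unchanged.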

Common Use Cases for Serverless Inference

- Chat & conversational AI

- Multilingual translation

- Code generation & developer assistants

- Embeddings for retrieval-augmented generation (RAG) pipelines

- Moderation & classification

- Real-time recommendation engines

- Vision + OCR tasks

- Personalized AI copilots

Rafay's serverless inference offering supports all of these through standardized APIs.

To see how this solution was built and why Rafay invested in turnkey inference capabilities, read the full introduction, "Introducing Serverless Inference: Team Rafay’s Latest Innovation."

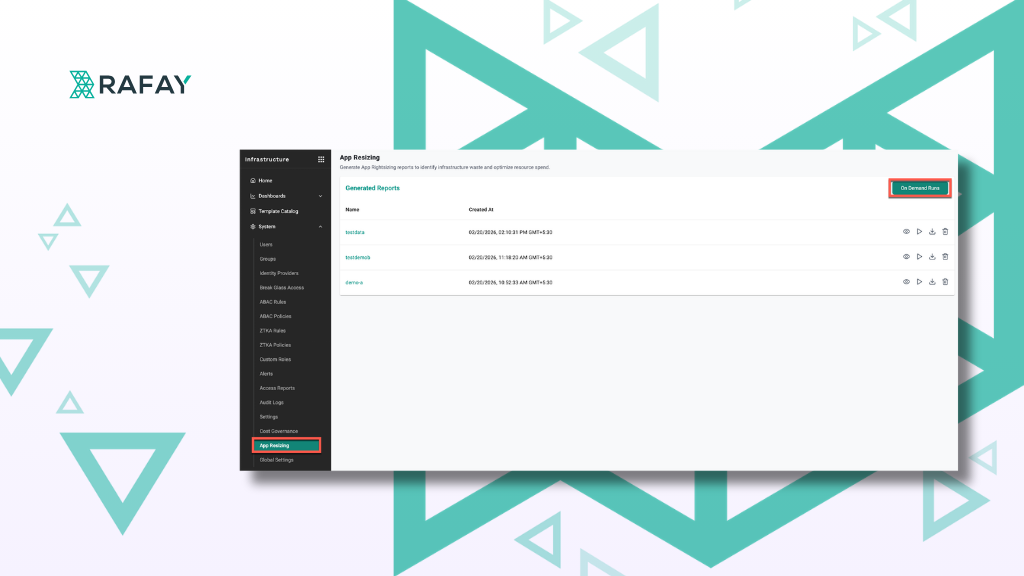

How Rafay Enables Turnkey Serverless Inference at Scale

Rafay provides a fully managed serverless inference platform designed for:

- NVIDIA Cloud Partners

- GPU Cloud Providers

- Platform engineering teams

- Enterprises delivering GenAI features

Plug-and-Play LLM Integration

Deploy LLMs like:

- Llama 3.2

- Qwen

- DeepSeek

- Mistral

…all through OpenAI-compatible API calls, with no code changes for applications that already use OpenAI-style clients.

Intelligent GPU Auto-Scaling

Rafay automates:

- Fleet scaling

- GPU packing

- Load distribution

- Warm pool management with provisioned concurrency

This optimizes cost and performance without manual intervention.

Token-Based Pricing & Cost Visibility

Supports token- or time-based billing with complete consumption tracking for:

- Tenants

- Projects

- Environments

- Models

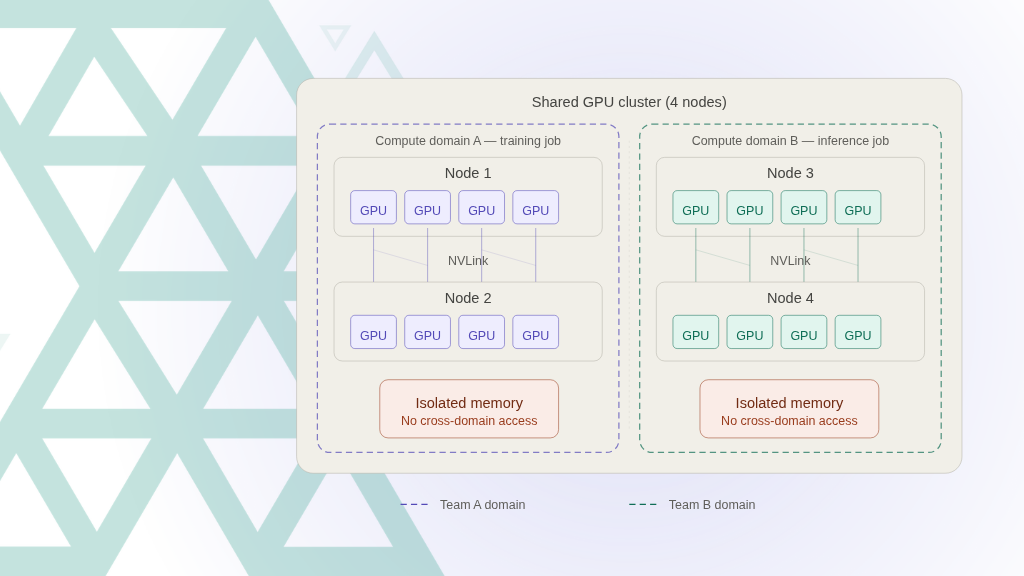

Enterprise-Grade Security and Governance

Rafay provides:

- HTTPS-only endpoints

- Bearer token authentication

- IP-level audit logging

- Token lifecycle controls

- Multi-tenant isolation
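
To illustrate what bearer token authentication and token lifecycle controls involve, here is a minimal server-side check. It is a generic sketch, not Rafay's implementation: the `IssuedToken` type and its fields are hypothetical, and a production system would typically store token hashes rather than raw values.

```python
import hmac
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class IssuedToken:
    value: str
    expires_at: float      # Unix timestamp after which the token is invalid
    revoked: bool = False  # set to True to disable the token early


def is_authorized(auth_header: str, token: IssuedToken,
                  now: Optional[float] = None) -> bool:
    """Validate an 'Authorization: Bearer <token>' header against an issued token."""
    now = time.time() if now is None else now
    scheme, _, presented = auth_header.partition(" ")
    if scheme.lower() != "bearer" or not presented:
        return False
    if token.revoked or now >= token.expires_at:
        return False
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(presented.encode(), token.value.encode())


token = IssuedToken(value="example-token", expires_at=time.time() + 3600)
print(is_authorized("Bearer example-token", token))
print(is_authorized("Bearer wrong-token", token))
```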

Available at No Additional Cost

Serverless inference is included for all Rafay platform customers and partners.

FAQs

What is serverless inference?

An on-demand execution model where machine learning models run without managing servers or GPUs.

How does serverless inference work?

Compute resources autoscale dynamically based on inference requests and concurrent invocations.

How does serverless inference differ from server-based inference?

Server-based inference requires long-running infrastructure and server provisioning. Serverless inference removes that burden with automatic scaling and pay-per-use pricing.

Is serverless inference good for LLMs?

Yes—especially for bursty, unpredictable, or large-scale workloads requiring low latency.

How does Rafay support serverless inference?

With autoscaling GPUs, turnkey LLM deployment, token billing, audit logs, and secure APIs.

Conclusion: Serverless Inference and the Future of AI Delivery

Serverless inference is quickly becoming the standard for delivering scalable AI and LLM applications. It offers:

- Lower costs through pay-per-use billing

- Faster iteration and model development

- Better performance with high availability

- Minimal infrastructure overhead for application teams

Rafay provides a complete, turnkey serverless inference solution—enabling GPU providers, cloud platforms, and enterprises to deliver cutting-edge AI models with minimal operational burden.

Explore Rafay’s Serverless Inference Capabilities
