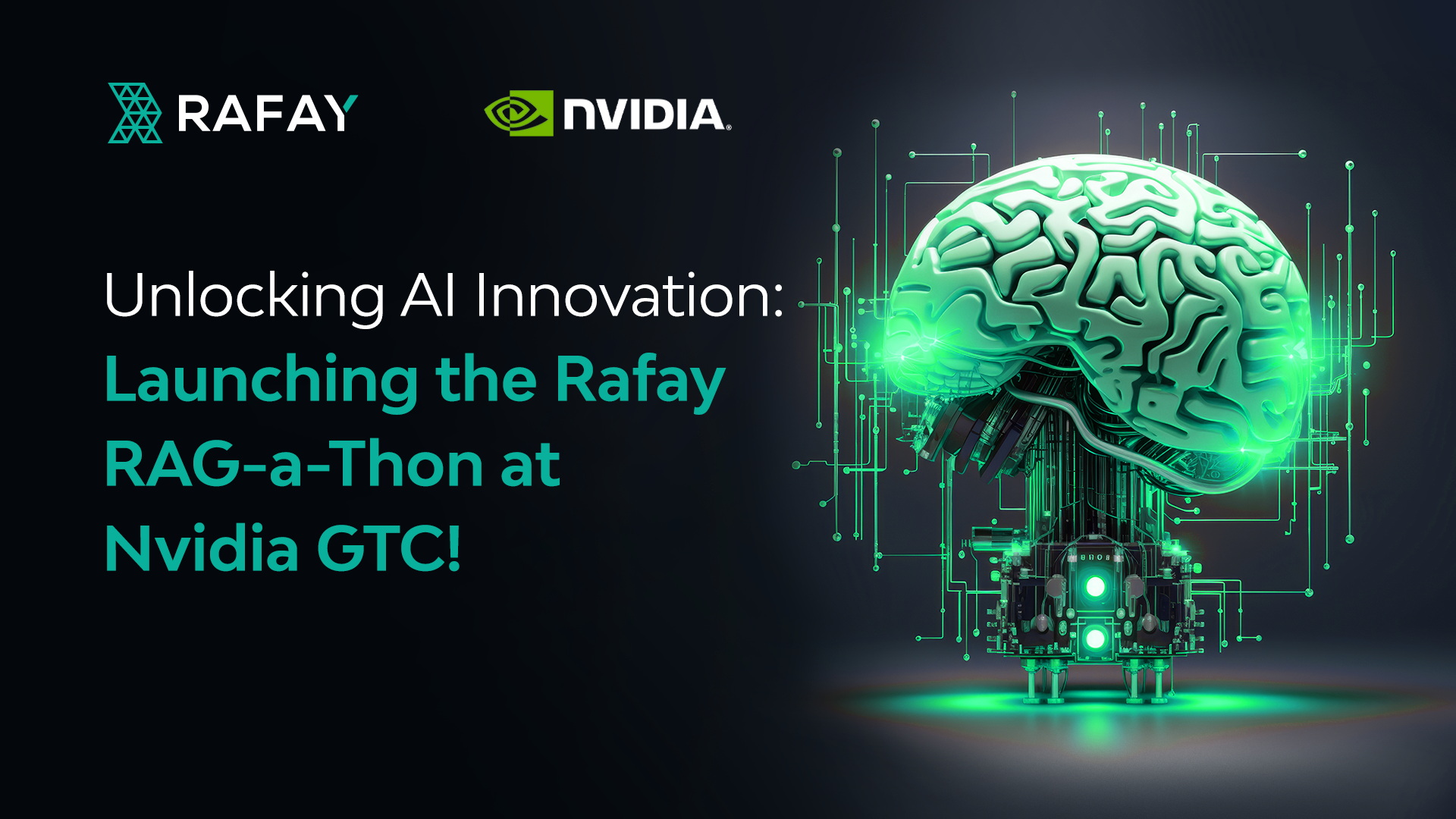

Unlocking AI Innovation: Launching the Rafay RAG-a-Thon at NVIDIA GTC!

Welcome, AI tech enthusiasts, innovators, and problem solvers! Today marks an exciting moment: this week at NVIDIA’s GTC event, we are introducing the community to the work we’ve been doing here at Rafay through a RAG-focused hackathon, which we call a RAG-a-Thon!

Rafay and NVIDIA are inviting 250 developers, data scientists, and visionaries from around the globe to join us for this exhilarating RAG-a-Thon. All the AI-enabled infrastructure you’ll need is included: the underlying accelerated computing infrastructure, NVIDIA GPUs, vector databases, Jupyter Notebooks, and more! Whether you’re a seasoned AI expert or a GenAI newbie, this RAG-a-Thon is an opportunity to hone your skills, channel your creativity, and contribute to the evolution of AI applications. Your test environment will be dynamically spun up on one of the following clouds:

- Microsoft Azure

- Google Cloud Platform

- Amazon Web Services

In this post, we’ll unveil the details of the RAG-a-Thon and show you how to consume accelerated computing infrastructure in a self-service fashion, in any cloud or on-prem environment, using Rafay’s enterprise-grade platform-as-a-service (PaaS)!

But sign up quickly: this RAG-a-Thon is open only to the first 250 people who sign up!

Who is Rafay?

Rafay’s enterprise customers can deliver curated, controlled accelerated computing and application infrastructure to developers and data scientists with the click of a button. Developers and data scientists focus on their day jobs, while platform engineers take care of Kubernetes clusters, network configuration, policy enforcement, add-ons, cluster multi-tenancy, remote access, app pipelines, LLMs, vector DBs, and more behind the scenes. Enterprises want to move fast with GenAI adoption, but they also want the controls necessary to reduce risk while breaking new ground.

What is a RAG-a-Thon?

A RAG-a-Thon is a hackathon focused on RAG (Retrieval-Augmented Generation) use cases. A RAG application is inherently complex: it must integrate several components, such as document ingestion, an embedding model, a vector database, and a text-generation model, along with the infrastructure that runs them, into one cohesive application. Tuning and optimizing each of these stages is essential to making a RAG application effective in real-world use. Below is a typical flow diagram for a RAG application:
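The flow boils down to four stages: split the source documents into chunks, embed the chunks, retrieve the chunks most similar to a user’s question, and pass those chunks to a text-generation model as context. The Python sketch below walks through the first three stages and assembles the final prompt. It is a minimal illustration under stated assumptions, not the workbench’s implementation: a toy bag-of-words vector stands in for a real embedding model such as intfloat/e5-large-v2, an in-memory list stands in for a vector database such as Milvus or Qdrant, and the function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `chunk_size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (hypothetical stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (in-memory stand-in for a vector DB)."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, contexts: list[str]) -> str:
    """Assemble a grounded prompt for the text-generation model."""
    context_block = "\n\n".join(f"[{i + 1}] {c}" for i, c in enumerate(contexts))
    return (
        "Answer the question using only the context below. "
        "If the answer is not in the context, say you don't know.\n\n"
        f"Context:\n{context_block}\n\nQuestion: {question}\nAnswer:"
    )


# Hypothetical sample documents (not real filings).
docs = [
    "Acme Corp reported total revenue of 120 million dollars for the quarter.",
    "Globex Inc reported a net loss of 5 million dollars for the quarter.",
]
chunks = [c for d in docs for c in chunk_text(d)]
question = "What revenue did Acme report?"
prompt = build_prompt(question, retrieve(question, chunks, k=1))
print(prompt)
```

In a real pipeline, `prompt` would be sent to the text-generation model; chunk size, overlap, and `k` are usually the first knobs worth tuning.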

What Rafay Delivers for RAG & Other GenAI-Focused Workflows

As our CEO, Haseeb Budhani, discusses in this video, when enterprises adopt Rafay, their developers and data scientists can access enterprise-grade infrastructure with a single click.

During the RAG-a-Thon, participants will get turnkey access to a RAG-ready workbench preconfigured with the following:

- Embedding and text-generation models from Hugging Face, hosted on NVIDIA Triton Inference Server:

  - Embedding model: intfloat/e5-large-v2

  - Text-generation models: meta-llama/Llama-2-7b-chat-hf and NousResearch/Llama-2-7b-chat-hf from Hugging Face. You need a Hugging Face token with permission to download these models. Access to the Llama models is gated on Hugging Face; if you have not already been granted access, request it by following the steps outlined in the Meta Model Request and on Hugging Face.

- NVIDIA RAG Operator framework

- Milvus and Qdrant vector databases for storing embeddings

- Jupyter Notebook with a Python environment for development

- Access to Rafay’s Cloud Automation Platform to provision the infrastructure, including:

  - Environments in the Amazon Web Services (AWS), Microsoft Azure, and/or Google Cloud Platform public clouds

  - 4x NVIDIA A10G GPUs
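Two input conventions matter if you call these models directly. According to the intfloat/e5-large-v2 model card, every input should start with a `query: ` or `passage: ` prefix, and a sentence embedding is the attention-masked average of the token embeddings, typically L2-normalized before cosine search. The Llama 2 chat models expect the `[INST]`/`<<SYS>>` prompt format. The helpers below are a stdlib-only sketch with hypothetical function names; whether the workbench’s Triton deployment already applies these conventions for you is an assumption to verify before relying on them.

```python
import math


def format_e5_inputs(texts: list[str], kind: str) -> list[str]:
    """Prefix inputs the way e5 models expect: 'query: ' for questions, 'passage: ' for documents."""
    if kind not in ("query", "passage"):
        raise ValueError("kind must be 'query' or 'passage'")
    return [f"{kind}: {t}" for t in texts]


def average_pool(token_vectors: list[list[float]], attention_mask: list[int]) -> list[float]:
    """Average token embeddings, skipping padding positions (mask value 0)."""
    kept = [vec for vec, m in zip(token_vectors, attention_mask) if m]
    if not kept:
        raise ValueError("attention mask selects no tokens")
    return [sum(column) / len(kept) for column in zip(*kept)]


def l2_normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


def build_llama2_chat_prompt(system: str, user: str) -> str:
    """Single-turn Llama 2 chat prompt; the tokenizer normally prepends the <s> BOS token itself."""
    return f"[INST] <<SYS>>\n{system}\n<</SYS>>\n\n{user} [/INST]"


print(format_e5_inputs(["What was Q2 revenue?"], "query"))
print(build_llama2_chat_prompt("Answer only from the provided context.", "Context: ...\nQuestion: ..."))
```

Mixing up the prefixes (for example, embedding questions as passages) tends to degrade retrieval quietly rather than fail loudly, which is why it is worth checking early.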

Our RAG-ready workbench follows the NVIDIA RAG pipeline and is powered by open-source embedding models, text-generation models, and vector stores. We chose this setup deliberately to maximize efficiency and effectiveness. Rafay’s implementation adheres to NVIDIA’s best practices for RAG configuration and operations, ensuring that participants have a cutting-edge, reliable, and high-performance environment for their projects.

With Rafay’s enterprise-grade PaaS offering, developers and data scientists get a user-friendly interface for building and deploying RAG applications. This streamlined experience boosts productivity and accelerates AI application development, QA, and deployment.

RAG-a-Thon Goal

Participants will develop a RAG QA application using Rafay’s Cloud Automation Platform and experiment with different embedding and text-generation models to improve the application’s performance.

This RAG-a-Thon requires you to build a question-answering engine for SEC 10-Q filings from multiple public companies. We will evaluate the application with queries that target a single document, queries that span multiple documents, and queries about data in tables.

Judging will be performed by Rafay and NVIDIA and will be based on 1) the performance and 2) the production readiness of your RAG application. Performance depends on your underlying RAG strategy, which is typically a combination of data chunking, embedding, retrieval, and text-generation models. Performance will be assessed using Ragas, an open-source library for evaluating RAG applications. Production readiness will be judged based on the top considerations described here, including:

- Decoupling chunks used for retrieval vs. chunks used for synthesis

- Structured retrieval for larger document sets

- Dynamically retrieving chunks depending on your task

- Optimizing context embeddings
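The first two considerations can be combined in one small sketch. Decoupling means matching the query against small units (here, single sentences) but handing the larger passage that contains the best match to the text-generation model, a pattern often called small-to-big retrieval. Structured retrieval means narrowing the search with metadata before ranking, for example restricting a question about one company to that company’s 10-Q. The names, toy bag-of-words scoring, and sample passages below are hypothetical stand-ins, not Rafay’s or NVIDIA’s implementation; in practice the metadata filter would typically be pushed down into the vector database, since both Milvus and Qdrant support filtering on stored metadata.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Child:
    text: str       # small unit used for matching (one sentence)
    parent_id: int  # index of the passage it came from
    company: str    # metadata used for structured filtering


def terms(text: str) -> Counter:
    """Toy bag-of-words vector (hypothetical stand-in for a real embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(parents: list[tuple[str, str]]) -> list[Child]:
    """Split each (company, passage) pair into sentence-level children that point back to it."""
    children = []
    for pid, (company, passage) in enumerate(parents):
        for sentence in re.split(r"(?<=[.!?])\s+", passage.strip()):
            if sentence:
                children.append(Child(sentence, pid, company))
    return children


def small_to_big_retrieve(query: str, parents: list[tuple[str, str]], children: list[Child],
                          k: int = 2, company: Optional[str] = None) -> list[str]:
    """Match the query against sentences, then return up to k distinct parent passages."""
    q = terms(query)
    # Structured retrieval: apply the metadata filter before ranking.
    candidates = [c for c in children if company is None or c.company == company]
    scored = [(cosine(q, terms(c.text)), c) for c in candidates]
    scored = [(s, c) for s, c in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    parent_ids: list[int] = []
    for _, child in scored:
        if child.parent_id not in parent_ids:
            parent_ids.append(child.parent_id)
        if len(parent_ids) == k:
            break
    # Decoupling: the generator sees the full parent passage, not just the matched sentence.
    return [parents[pid][1] for pid in parent_ids]


# Hypothetical passages tagged with company metadata.
parents = [
    ("ACME", "Revenue grew 10 percent. Operating expenses were flat. Headcount rose."),
    ("ACME", "The company repurchased shares. Dividends were unchanged."),
    ("GLOBEX", "Revenue declined 3 percent. The company cut costs."),
]
children = build_index(parents)
print(small_to_big_retrieve("How did revenue change?", parents, children, company="ACME"))
```

Matching on sentences keeps each embedding focused on one fact, while returning the parent passage gives the model the surrounding context it needs to answer.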

The top-performing RAG application will win a Meta Quest 3 All-in-One VR Headset (in the case of a tie, the winner will be chosen by random raffle).

To submit your RAG application’s performance, complete the following two steps:

- Take a screenshot of your metrics and post it on both LinkedIn and X using the hashtag #RafayGTCRagaThon.

- Then email the metrics, along with the Jupyter Notebook containing your implementation, to ragathon@rafay.co.
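Judging is based on Ragas. While you iterate on chunking, embeddings, and retrieval, though, a cheaper retrieval-only check can catch regressions between full evaluation runs: label a handful of questions with the ID of the chunk that answers them, then compute hit rate and mean reciprocal rank (MRR) over the retriever’s top-k results. This is a minimal sketch with hypothetical chunk IDs; hit rate and MRR are standard information-retrieval measures, not Ragas metrics, and they do not replace the official evaluation.

```python
def hit_rate_and_mrr(ranked_ids: list[list[str]], relevant_ids: list[str],
                     k: int = 5) -> tuple[float, float]:
    """Hit rate@k and MRR@k for queries that each have one known relevant chunk ID."""
    if not relevant_ids or len(ranked_ids) != len(relevant_ids):
        raise ValueError("need one ranked list per labeled query")
    hits, reciprocal_ranks = 0, 0.0
    for ranked, relevant in zip(ranked_ids, relevant_ids):
        top_k = ranked[:k]
        if relevant in top_k:
            hits += 1
            # Rank is 1-based: a hit in first place contributes 1.0, second place 0.5, ...
            reciprocal_ranks += 1.0 / (top_k.index(relevant) + 1)
    n = len(relevant_ids)
    return hits / n, reciprocal_ranks / n


# Hypothetical retriever output (chunk IDs, best first) and hand-labeled answers.
ranked = [["c1", "c2", "c3"], ["c5", "c4"], ["c9", "c8"]]
relevant = ["c1", "c4", "c7"]
hit_rate, mrr = hit_rate_and_mrr(ranked, relevant, k=2)
print(f"hit rate@2 = {hit_rate:.2f}, MRR@2 = {mrr:.2f}")  # prints: hit rate@2 = 0.67, MRR@2 = 0.50
```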

But hurry, this RAG-a-Thon only lasts for the week of NVIDIA GTC!

Getting Started

To help you get started quickly, participants will receive instructions and a sample application. You can find the SEC 10-Qs that will serve as data sources here. We will also provide detailed documentation and a video on how to use the Rafay Cloud Automation Platform, along with validation and assessment guidelines to help you evaluate your models during the RAG-a-Thon.

Access to the Llama models is gated on Hugging Face. If you do not have access yet, request it by following the steps from the Meta Model Request and Hugging Face.

Sign up for the ultimate challenge in AI innovation at our upcoming RAG-a-Thon! Once you apply by submitting the form, your credentials will be emailed to you in about 15 minutes. Don’t miss the opportunity to be at the forefront of AI technology: sign up now to secure your spot and shape the future of intelligent applications. Only the first 250 applications will be approved!