TELUS, One of Canada’s Largest Telecom Companies, Launches a Sovereign, Developer-Ready AI Studio Powered by Rafay

Key Highlights

Sovereign by Design

TELUS’ AI Cloud is architected to ensure that all data, whether at rest or in transit, remains entirely within Canadian borders.

A First-Mover in North America

TELUS is the first North American telecommunications provider in the NVIDIA Cloud Partner Program to deliver a fully validated AI stack, one that significantly reduces deployment time and cost for enterprises and startups building production-grade AI solutions.

Developer-Ready AI Studio

AI Studio provides a self-service workspace where developers and data scientists can build, fine-tune, and deploy applications using NVIDIA NIM microservices, NVIDIA NeMo toolkits, and NVIDIA AI Blueprints, all orchestrated through the Rafay Platform.

True Self-Service Consumption

Through rich APIs and a modern web portal, developers can provision and manage GPU-powered compute, including Kubernetes clusters and virtual machines, on demand.


AI Factory FAQs

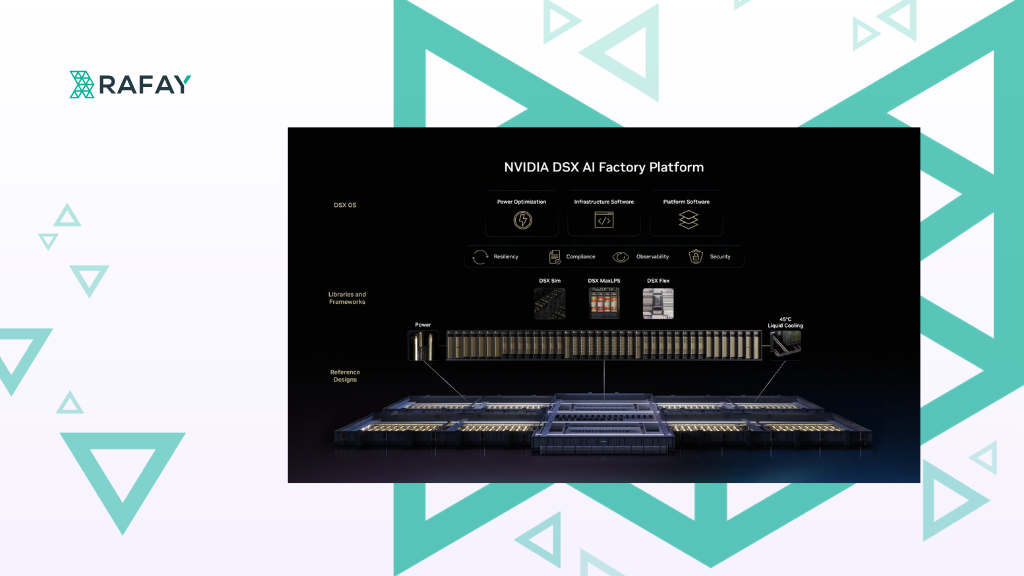

Rafay is not a GPU manufacturer or model provider. Rafay provides an infrastructure orchestration and consumption platform that lets organizations operate AI factories by turning their AI infrastructure into a governed, self-service resource. Learn more about AI factories here: https://rafay.co/ai-and-cloud-native-blog/what-is-an-ai-factory

Rafay provides the control plane for AI factories, handling orchestration, multi-tenancy, governance, and self-service access to AI infrastructure across cloud, on-prem, and sovereign environments.

AI factories are used by enterprises, cloud service providers, and sovereign AI clouds that need to scale AI workloads efficiently, maximize GPU utilization, and deliver AI as a production service rather than as isolated projects. You can see how Rafay worked with Canadian telecommunications provider TELUS in this case study.

A token in AI is a unit of text that a language model processes. Instead of reading full words or sentences, AI models break text into smaller pieces called tokens, which can be whole words, parts of words, punctuation, or symbols. Large language models generate responses one token at a time, and token counts determine context limits, performance, and cost.
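To make this concrete, here is a minimal Python sketch. It assumes a toy tokenizer that splits text into words and punctuation; production models use learned subword tokenizers (such as byte-pair encoding), so real token counts will differ. The function names and the per-million-token price are hypothetical and only illustrate how token counts feed into context limits and cost.

```python
import re

# Toy tokenizer for illustration only: it splits text into runs of word
# characters and single punctuation marks. Real LLM tokenizers are learned
# subword vocabularies, so their token counts will differ.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def toy_tokenize(text: str) -> list[str]:
    """Return word and punctuation tokens, discarding whitespace."""
    return TOKEN_PATTERN.findall(text)


def fits_context(tokens: list[str], context_limit: int) -> bool:
    """Check whether a prompt fits within a model's context window."""
    return len(tokens) <= context_limit


def estimate_cost(num_tokens: int, price_per_million: float) -> float:
    """Estimate cost from a hypothetical price per million tokens."""
    return num_tokens * price_per_million / 1_000_000


tokens = toy_tokenize("Tokens aren't always whole words!")
print(tokens)       # ['Tokens', 'aren', "'", 't', 'always', 'whole', 'words', '!']
print(len(tokens))  # 8
print(fits_context(tokens, context_limit=4096))  # True
```

Even this crude splitter shows a single word ("aren't") becoming several tokens, which is why token counts usually exceed word counts.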

LLM token generation works by tokenizing an input prompt, running the tokens through a trained neural network, and predicting a probability distribution over the next token, from which one token is selected (for example, the most probable one). The selected token is appended to the input, and the process repeats until the full response is produced. Because each new token is conditioned on all the tokens that came before it, the model can generate coherent text.
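The loop below is a minimal sketch of this process using greedy decoding, which always picks the most probable next token. A small hypothetical probability table stands in for the trained network and only looks at the last token, whereas a real LLM conditions on the whole context. `BIGRAM_PROBS`, `next_token_distribution`, and `generate` are illustrative names, not a real library API.

```python
# Hypothetical next-token probabilities standing in for a trained network.
# Each key is the previous token; each value is a distribution over next tokens.
BIGRAM_PROBS: dict[str, dict[str, float]] = {
    "<start>": {"AI": 0.6, "GPUs": 0.4},
    "AI": {"models": 0.7, "factories": 0.3},
    "models": {"generate": 0.8, "<end>": 0.2},
    "generate": {"tokens": 0.9, "<end>": 0.1},
    "tokens": {"<end>": 1.0},
}


def next_token_distribution(context: list[str]) -> dict[str, float]:
    """Toy stand-in for a forward pass: only the last token is used,
    whereas a real LLM conditions on the entire context."""
    return BIGRAM_PROBS.get(context[-1], {"<end>": 1.0})


def generate(prompt: list[str], max_new_tokens: int = 10) -> list[str]:
    """Generate tokens one at a time with greedy decoding."""
    context = ["<start>"] + prompt
    output: list[str] = []
    for _ in range(max_new_tokens):
        dist = next_token_distribution(context)
        token = max(dist, key=lambda t: dist[t])  # pick the most probable token
        if token == "<end>":
            break
        output.append(token)
        context.append(token)  # the new token becomes part of the input
    return output


print(generate(["AI"]))  # ['models', 'generate', 'tokens']
```

Swapping the `max` call for random sampling weighted by the probabilities would turn this into sampled decoding, which is how many deployments add variety to responses.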

An AI Token Factory is the operating layer that transforms GPU infrastructure into governed, consumable AI services.

Instead of exposing raw GPUs or unmanaged clusters, organizations deliver production-ready model APIs that are:

- Token-metered for transparent usage tracking

- Multi-tenant with strict isolation and RBAC

- Quota-controlled to prevent runaway spend

- Governed by policy and compliance guardrails

- Monetizable through usage-based billing
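As an illustration of token metering, quota control, and usage-based billing, the following sketch keeps a separate meter per tenant and rejects requests that would exceed that tenant's quota. `TenantMeter`, `QuotaExceededError`, and the prices are hypothetical and do not represent Rafay's APIs; a production system would also need persistence, concurrency control, and RBAC checks.

```python
from dataclasses import dataclass


class QuotaExceededError(Exception):
    """Raised when a request would push a tenant past its token quota."""


@dataclass
class TenantMeter:
    """Hypothetical per-tenant token meter with a hard quota."""

    tenant_id: str
    quota_tokens: int
    used_tokens: int = 0

    def record(self, prompt_tokens: int, completion_tokens: int) -> int:
        """Meter one request's tokens, rejecting it if the quota would be exceeded.

        Simplification: counts are checked once they are known; a real service
        might reserve an upper bound before generation starts.
        """
        total = prompt_tokens + completion_tokens
        if self.used_tokens + total > self.quota_tokens:
            raise QuotaExceededError(
                f"tenant {self.tenant_id} would use "
                f"{self.used_tokens + total} of {self.quota_tokens} tokens"
            )
        self.used_tokens += total
        return total

    def remaining(self) -> int:
        """Tokens left before the tenant hits its quota."""
        return self.quota_tokens - self.used_tokens

    def bill(self, price_per_million: float) -> float:
        """Usage-based charge at a hypothetical price per million tokens."""
        return self.used_tokens * price_per_million / 1_000_000


# Each tenant gets its own meter, so one tenant's usage never affects another's.
meters = {
    "team-a": TenantMeter("team-a", quota_tokens=1_000),
    "team-b": TenantMeter("team-b", quota_tokens=5_000),
}
meters["team-a"].record(prompt_tokens=300, completion_tokens=200)
try:
    meters["team-a"].record(prompt_tokens=400, completion_tokens=200)
except QuotaExceededError as err:
    print(f"rejected: {err}")
print(meters["team-a"].remaining(), meters["team-b"].remaining())  # 500 5000
```

Keeping metering in a per-tenant object means the same counters drive quota enforcement and billing, so what a tenant is stopped at and what it is charged for cannot drift apart.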

Serverless inference is how models are delivered. A Token Factory is how they are scaled, controlled, and turned into repeatable services.

Put another way, a Token Factory is a system designed to generate, process, and manage AI model tokens at scale. It combines model serving, orchestration, and optimized inference infrastructure to convert compute resources efficiently into high-throughput token generation for production AI applications.

An inference engine is the system that runs a trained AI model to generate predictions or text in real time. In large language models, the inference engine processes input tokens and produces output tokens. Its efficiency directly impacts response speed, scalability, and cost per token.
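A rough worked example shows why inference-engine efficiency matters for cost: the cost of a million tokens is the GPU's hourly cost divided by the number of tokens it produces per hour. The function name, prices, and throughput figures below are hypothetical, and the model ignores batching and networking overheads.

```python
def cost_per_million_tokens(
    gpu_hourly_cost: float,
    tokens_per_second: float,
    utilization: float = 1.0,
) -> float:
    """Cost of generating one million tokens on one GPU.

    utilization is the fraction of each hour the GPU spends serving requests.
    All inputs are hypothetical; batching and networking overheads are ignored.
    """
    if tokens_per_second <= 0 or not 0 < utilization <= 1:
        raise ValueError("tokens_per_second must be > 0 and utilization in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical GPU at $3.60/hour:
print(round(cost_per_million_tokens(3.60, 1_000), 2))                   # 1.0
print(round(cost_per_million_tokens(3.60, 2_000), 2))                   # 0.5
print(round(cost_per_million_tokens(3.60, 1_000, utilization=0.5), 2))  # 2.0
```

Under these assumptions, doubling engine throughput halves the cost per token, while leaving the same GPU idle half the time doubles it.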