The early era for AI was defined by experimentation, standing up isolated environments, and finding the first practical use cases. Today, the conversation is different. Enterprises are no longer asking whether AI matters. They are asking how to scale it sustainably, securely, and economically.

That shift is giving rise to the AI factory: a repeatable, governed, production-ready environment where data scientists, platform teams, and application teams can build, train, deploy, and operate AI at scale.

But as AI factories mature, a hard truth is becoming clear. The limiting factor is no longer just access to models. It is the efficient use of infrastructure.

GPUs are scarce, expensive, and often underutilized. AI workloads are dynamic and unpredictable. Platform teams are being asked to balance performance, cost, governance, and speed at the same time. In that environment, the winners will not simply be the organizations with the largest GPU clusters. They will be the ones that can operate AI infrastructure with the greatest intelligence and efficiency.

That is why the partnership between Rafay and Kubex is timely.

The Next Challenge in Enterprise AI

Enterprises have made enormous progress in operationalizing AI, but the infrastructure layer remains a growing source of friction.

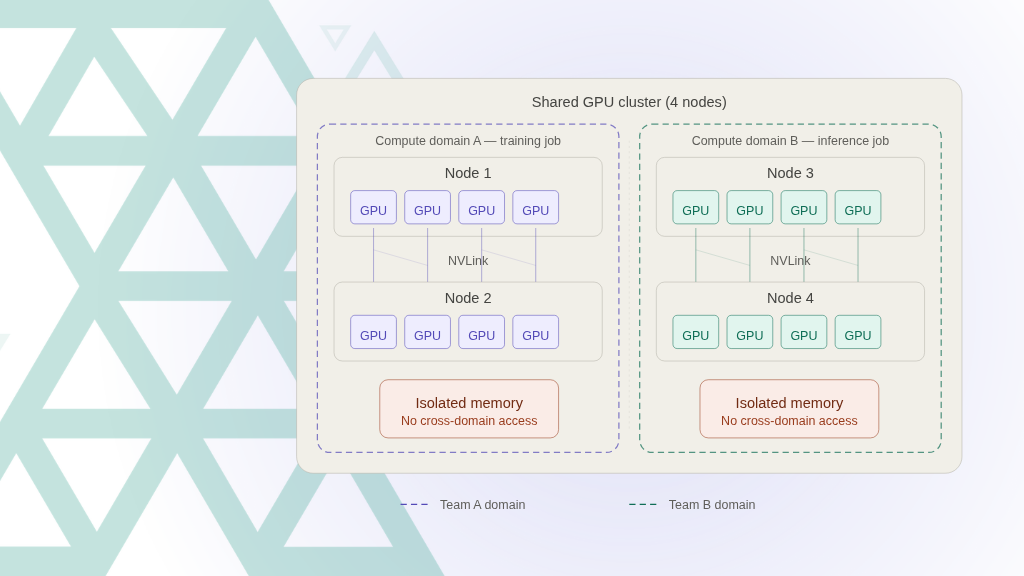

As organizations support a mix of model training, fine-tuning, batch inference, and real-time inference, infrastructure demand becomes more variable and more complex. Kubernetes clusters need to scale quickly. Resources need to be shared intelligently. Security and governance cannot be compromised. And every inefficiency carries a real financial penalty.

This is where many organizations discover the gap between building AI workloads and running an AI factory.

An AI factory is not just a collection of tools. It is an operating model. It requires a secure and scalable platform foundation. It also requires continuous optimization: the ability to adapt infrastructure in real time to shifting demand without relying on constant manual intervention.

That is the promise behind Rafay and Kubex operating together.

From AI Infrastructure to Intelligent AI Operations

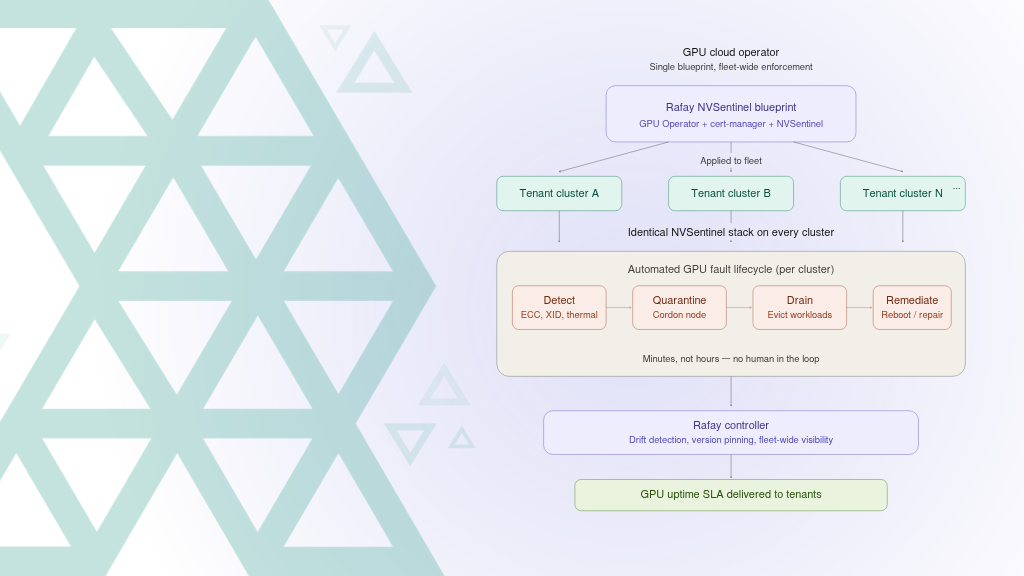

Rafay helps enterprises build and operate secure, scalable AI platforms on Kubernetes. It provides the operational backbone organizations need to bring governance, consistency, and self-service to AI infrastructure at enterprise scale.

Kubex extends that foundation with autonomous GPU optimization across your AI factory. It observes actual workload demand, infrastructure utilization, and scheduling efficiency, then acts within the boundaries you set to keep GPU and AI infrastructure running at peak efficiency without manual intervention.

Together, the partnership points toward a more advanced model for AI operations: one in which orchestration and governance are not separate from efficiency, but directly connected to it. Customers can use Rafay’s AI infrastructure orchestration and workflow management capabilities alongside Kubex’s embedded optimization to create AI factories that are not only scalable and controlled, but continuously improved for efficiency and cost.

That combination matters because enterprise AI no longer rewards static infrastructure strategies. It rewards systems that can sense, adapt, and optimize as workloads evolve.

Why Efficiency Has Become a Strategic Imperative

For many organizations, the economics of AI are now under as much scrutiny as the innovation itself.

Leaders want to accelerate AI adoption, but they also want to understand the true cost of delivering outcomes. In practical terms, that means focusing on metrics like utilization, responsiveness, and cost per token.
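To make the link between utilization and cost per token concrete, here is a minimal back-of-the-envelope sketch. The function name, the hourly GPU price, and the peak throughput figure are all hypothetical values chosen for illustration; they are not numbers from Rafay or Kubex.

```python
def cost_per_million_tokens(gpu_hourly_cost, peak_tokens_per_second, utilization):
    """Effective cost of serving 1M tokens on one GPU at a given average utilization.

    All inputs are illustrative assumptions, not vendor figures.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Tokens actually produced per hour once idle time is accounted for.
    tokens_per_hour = peak_tokens_per_second * utilization * 3600
    return gpu_hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical inputs: $4.00 per GPU-hour, 2,000 tokens/s at full load.
for utilization in (0.25, 0.50, 0.80):
    cost = cost_per_million_tokens(4.00, 2000, utilization)
    print(f"{utilization:.0%} utilized: ${cost:.2f} per 1M tokens")
```

Under these assumptions the hourly bill is fixed, so doubling utilization halves the cost per token. That is why utilization tends to be the first lever to examine before adding hardware.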

When GPU resources are not allocated effectively, the cost of both training and inference rises. When clusters are slow to respond to changing demand, teams lose momentum. When optimization depends on heroic manual effort, operational complexity becomes a barrier to scale.

The answer is not simply to add more hardware. In many cases, the better path is to unlock more value from the infrastructure already in place.

This is exactly where the Rafay and Kubex partnership creates leverage.

With improved resource management, customers can lower the effective cost of training and inference through better utilization. Automated GPU slicing and intelligent scheduling help infrastructure align more closely with actual workload demand. And embedded, ML-driven optimization enables continuous operational improvement without requiring constant human tuning.

On top of this, capabilities such as node pre-warming, bin packing, node draining, and optimized component-level scaling increase elasticity and responsiveness. These move the infrastructure from reactive to predictive: it anticipates demand so that capacity is running before it is needed, then scales back as soon as load drops.
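As an illustration of what bin packing means in this context, the sketch below uses first-fit decreasing, a classic textbook heuristic, to place GPU requests on as few nodes as it can find. This is not a description of how Kubex or Rafay schedule workloads; the function name, workload names, and node size are hypothetical.

```python
def pack_first_fit_decreasing(requests, node_capacity):
    """Place GPU requests onto nodes using the first-fit decreasing heuristic.

    requests: dict mapping a (hypothetical) workload name to whole GPUs requested
    node_capacity: GPUs available on each node
    Returns a list of nodes, each a list of the workload names placed on it.
    """
    nodes = []  # each entry tracks remaining GPUs and the workloads placed so far
    # Largest requests first: big workloads are the hardest to place later.
    ordered = sorted(requests.items(), key=lambda item: item[1], reverse=True)
    for name, gpus in ordered:
        if gpus > node_capacity:
            raise ValueError(f"{name} needs {gpus} GPUs; a node has {node_capacity}")
        for node in nodes:
            if node["free"] >= gpus:  # first node with enough room wins
                node["free"] -= gpus
                node["workloads"].append(name)
                break
        else:
            # No existing node had room, so open a new one.
            nodes.append({"free": node_capacity - gpus, "workloads": [name]})
    return [node["workloads"] for node in nodes]


demo = {"train-a": 6, "finetune-b": 4, "infer-c": 2, "infer-d": 2, "batch-e": 2}
print(pack_first_fit_decreasing(demo, node_capacity=8))
# [['train-a', 'infer-c'], ['finetune-b', 'infer-d', 'batch-e']]
```

In this example, five workloads requesting 16 GPUs in total fit onto two 8-GPU nodes with nothing left idle. A production scheduler would also have to weigh priorities, affinity, GPU slicing, and disruption cost, which this sketch ignores.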

The broader implication is important: infrastructure efficiency is no longer just an operational concern. It is becoming a competitive advantage.

The Rise of the Autonomous AI Factory

One of the most important ideas behind this partnership is autonomy.

For years, enterprise infrastructure has depended heavily on manual tuning, policy enforcement, and operator oversight. But AI environments move too quickly, and are too complex, for that model to scale cleanly. Mixed workloads, multi-tenant usage patterns, and rapid shifts in demand require a different approach.

The next-generation AI factory must be more autonomous. This does not mean removing governance or reducing control. It means embedding intelligence into the platform itself so that optimization happens continuously, safely, and within enterprise guardrails.

Rafay brings the governance, orchestration, and workflow management needed for enterprise AI environments. Kubex adds autonomous resource optimization that improves GPU efficiency and system responsiveness in the background. The result is a platform model where operational excellence is built in, not bolted on.

For customers, this means platform teams can spend less time firefighting utilization issues and more time enabling innovation across the organization.

Built for the Realities of Enterprise AI

This partnership is especially meaningful because it reflects the realities of how enterprises actually run AI.

AI factories are rarely clean, single-purpose environments. They support different teams, different priorities, and different workload types. They must serve both experimentation and production. They must accommodate training and inference side by side. And they must do all of this inside environments where governance, security, and compliance are non-negotiable.

Rafay and Kubex are aligned to that reality.

The combined solution is built for dynamic, multi-tenant AI platforms and supports mixed training and inference workloads. It is enterprise-ready by design, fitting into Rafay-managed Kubernetes environments without disrupting the security or governance models customers already rely on. This is critical. Enterprises do not want efficiency gains that come at the expense of control. They want optimization that strengthens the platform, not complexity that fragments it.

What This Means for Customers

For customers, the value of this partnership goes beyond technical enhancement. It represents a more complete path to sustainable AI scale.

Enterprises need to move faster without waiting on additional GPU capacity. They need to control costs even as demand grows. They need to reduce the operational burden on platform teams. And they need to ensure that AI infrastructure can support long-term adoption across the business.

By combining Rafay’s best-in-class orchestration and workflow management with Kubex’s automated optimization capabilities, customers can extract more value from every GPU, improve elasticity, accelerate time to value, and operate AI factories with greater confidence.

In other words, they gain not just a stronger platform, but a better operating model for AI. The future of enterprise AI will belong to platforms that combine three things: secure orchestration, strong governance, and intelligent optimization.

Executive Perspective

“Rafay has emerged as an industry leader for Kubernetes and AI Infrastructure self-serve enablement. We’ve seen, first-hand, the benefits of Rafay’s platform in customer environments. We’re excited to give customers the ability to extend Rafay’s best in class orchestration and workflow management with Kubex’s leading automated resource optimization capability.”

— Riyaz Somani, CEO, Kubex

“AI factories require more than orchestration, they require continuous resource tuning and optimization. Rafay delivers the control plane for secure, multi-tenant AI platforms, and Kubex optimizes the infrastructure resources for those environments in real time. This partnership enables enterprises to operate AI infrastructure with both precision and efficiency at scale.”

— Haseeb Budhani, CEO, Rafay Systems

Summary

The future of enterprise AI will not be determined solely by who has the most ambitious use cases or the most advanced models. It will be determined by who can build the most efficient, scalable, and adaptive systems to support them. AI factories are becoming the new engine of innovation. To power them effectively, enterprises need more than infrastructure. They need intelligent operations. That is the opportunity Rafay and Kubex are addressing together.

The partnership signals an important direction for the industry: toward AI platforms that are not only secure and scalable, but autonomously optimized to deliver more value from every resource they consume. For enterprises navigating the next stage of AI adoption, that is not just useful. It is essential.