Telcos are investing heavily in distributed infrastructure. The land, power, and connectivity are already in place. What's been missing is the orchestration fabric that transforms those existing assets into a self-service engine for AI innovation.

Today, Rafay, a member of the NVIDIA Inception program, brings infrastructure orchestration and workload automation to AI Grid architectures, enabling telcos and service providers to transform distributed GPU environments into a governed, self-service platform. Built in close collaboration with NVIDIA and aligned to the NVIDIA AI Grid reference design, the platform abstracts operational complexity, turning GPU infrastructure into an instantly consumable, multi-tenant, production-ready AI factory.

Telcos Have the Infrastructure. Now They Need the Intelligence Layer

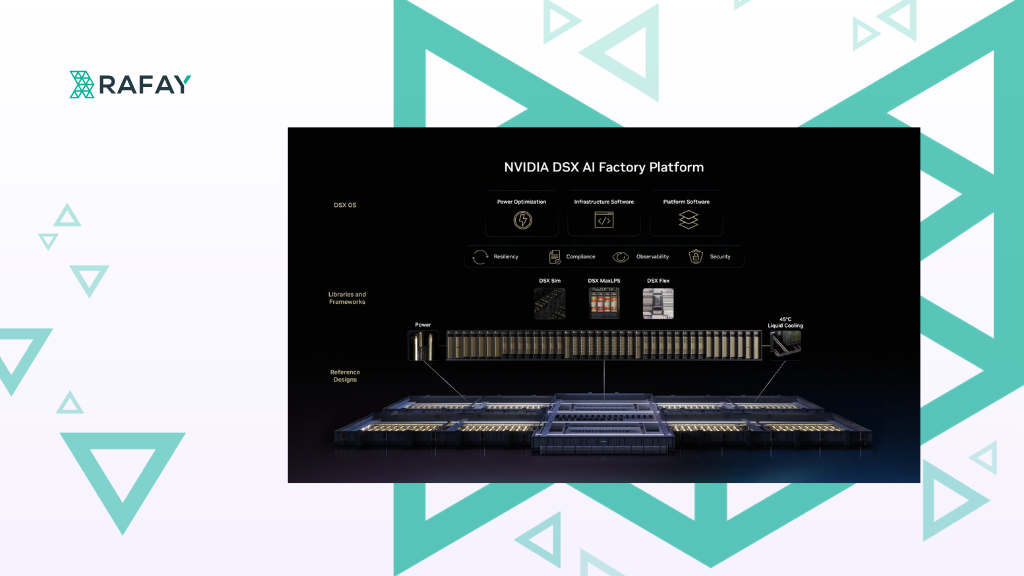

NVIDIA's AI Grid reference design gives telco operators a unified compute substrate, high-performance networking, and a software stack: a common foundation for building, deploying, and orchestrating AI across distributed sites.

The mission is to create a unified intelligence layer that is workload-aware, resource-aware, and KPI-aware, so that every workload runs at the site that best optimizes what it needs, whether that is cost, latency, or throughput, within the given constraints. This is where an intelligent orchestration layer that controls workloads and resources becomes critical.

Currently, telco infrastructure is often constrained by manual workflows, fragmented tooling, and siloed governance. Platform teams wrestle with complexity while developers and data scientists wait for access to clusters, environments, and GPUs. With distributed AI grids, the challenge becomes even more complex: ensuring workloads are placed correctly and consistently across dozens, or hundreds, of distributed sites, while maintaining security, governance, and lifecycle control across a grid that spans metros, regions, and sovereign boundaries.

Developers and data scientists should be able to push a button and spin up ready-to-use environments, not file a ticket and wait. AI grids require an infrastructure orchestration and workflow automation platform that translates operator intent into reliable, policy-driven deployment automatically, delivering self-service consumption of compute and application workflows while maintaining infrastructure security, governance, and auditability end to end.

Turning Intent Into Intelligent Action

The Rafay Platform has been extended into a turnkey solution for telcos, based on the AI Grid reference design, that provides the orchestration and automation required to make distributed GPU environments secure, multi-tenant, and production-ready.

The Rafay Platform’s support for AI Grid is built around a single principle: operators should express intent, not manage individual clusters. Developers define the environmental and organizational attributes and requirements for a workload, such as location, GPU class, latency zone, cost tier, and security constraints. The Platform then dynamically determines where and how to deploy it across the grid.
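To make the idea of "expressing intent" concrete, here is a minimal Python sketch of what an intent record could look like. It is an illustration only, not the Rafay Platform's schema or API: the `WorkloadIntent` class, its field names, and the example values are all hypothetical, chosen to mirror the attributes listed above.

```python
from dataclasses import dataclass, asdict
from typing import Tuple


@dataclass(frozen=True)
class WorkloadIntent:
    """Hypothetical intent record; field names are illustrative, not a Rafay schema."""
    name: str
    location: str                 # a region or metro label (assumed naming)
    gpu_class: str                # the kind of accelerator the workload needs
    latency_zone: str             # how close to users the workload must run
    cost_tier: str                # the budget band the workload may use
    security_constraints: Tuple[str, ...] = ()


# The developer states requirements; no cluster or site is named anywhere.
intent = WorkloadIntent(
    name="video-analytics",
    location="eu-west",
    gpu_class="inference",
    latency_zone="metro-edge",
    cost_tier="standard",
    security_constraints=("data-residency:eu",),
)

# A plain dictionary form that could be handed to a placement service.
intent_doc = asdict(intent)
print(intent_doc)
```

The point of the sketch is what is absent: the record carries no cluster name, so the decision about where the workload lands stays with the platform.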

Intelligent GPU Allocation and Scheduling

The Rafay Platform captures workload intent and feeds it into a matchmaking engine that combines real-time telemetry signals with authoritative inventory. Placement decisions account for live GPU availability, site health, capacity pressure, performance indicators, and site-level constraints, enabling GPU-aware, workload-aware placement at the accelerator level across every site in the grid. This ensures latency requirements are met while optimizing overall infrastructure utilization.
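As a rough illustration of this kind of matchmaking, the Python sketch below filters sites on hard constraints and then ranks the survivors. It is a toy model under stated assumptions, not the Rafay Platform's engine: the `Site` and `Request` types, the `place` function, the sample data, and the ranking policy (prefer the least-utilized eligible site, then the lowest latency) are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    """Hypothetical snapshot of one site: inventory plus telemetry."""
    name: str
    gpu_class: str
    free_gpus: int       # live GPU availability
    healthy: bool        # site health signal
    latency_ms: float    # measured latency to the workload's users
    utilization: float   # capacity pressure, from 0.0 to 1.0


@dataclass
class Request:
    """Hypothetical subset of workload intent used for placement."""
    gpu_class: str
    gpus_needed: int
    max_latency_ms: float


def place(request: Request, sites: List[Site]) -> Optional[Site]:
    """Return the chosen site, or None if no site satisfies the constraints."""
    # Step 1: hard constraints. A site that fails any of them is never chosen.
    candidates = [
        s for s in sites
        if s.healthy
        and s.gpu_class == request.gpu_class
        and s.free_gpus >= request.gpus_needed
        and s.latency_ms <= request.max_latency_ms
    ]
    if not candidates:
        return None
    # Step 2: assumed ranking policy. Prefer the site under the least
    # capacity pressure, breaking ties by lower latency.
    return min(candidates, key=lambda s: (s.utilization, s.latency_ms))


sites = [
    Site("metro-a", "inference", 2, True, 8.0, 0.9),
    Site("metro-b", "inference", 4, True, 12.0, 0.4),
    Site("region-c", "inference", 16, True, 45.0, 0.1),
    Site("metro-d", "inference", 8, False, 6.0, 0.2),
]

chosen = place(Request("inference", gpus_needed=2, max_latency_ms=20.0), sites)
print(chosen.name if chosen else "no eligible site")
```

In this sample, `region-c` is excluded for exceeding the latency bound and `metro-d` for being unhealthy, so the choice falls between the two remaining metro sites. A production engine would weigh many more signals; the sketch only shows the filter-then-rank shape of the decision.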

Simplified Management of Distributed Clusters

Once placement is determined, the Rafay Platform coordinates a consistent rollout through a centralized control plane that spans data centers and distributed edge sites. The orchestration layer integrates with NVIDIA's reference control plane and telemetry ecosystem to ensure workloads are deployed consistently, transparently, and in alignment with defined policies regardless of where they land. Platform engineering teams can streamline the ongoing operations of Kubernetes-based and virtual machine-based environments across the entire grid.

Scale Compute with Confidence

Multi-tenancy controls, enterprise-grade governance, and compliance have become foundational requirements. The Rafay Platform ensures telcos and service providers can operate infrastructure that powers secure, compliant, and scalable AI applications. Multiple enterprise tenants, business units, and AI applications can share the same physical grid with full governance and per-tenant lifecycle management, transforming GPU infrastructure into a scalable, secure, and commercially composable service layer.

Ready From Day One

From demo to production in weeks, not months. The Rafay Platform's infrastructure orchestration layer gets telcos operational fast, with built-in integrations with NVIDIA AI infrastructure. Operators can onboard onto the AI grid without the burden of building orchestration capabilities from scratch, and without scaling their operational teams proportionally as the grid grows.

Real-Time Observability Into Usage, Cost, and Resource Allocation

Operators gain fleet-wide visibility into workload placement, utilization, and policy enforcement across the entire grid. Placement decisions are transparent, auditable, and aligned with enterprise SLA, compliance, and governance requirements. Lifecycle actions such as deploy, update, rollback, and stop are supported across the full grid, with built-in guardrails for compliance and resource control that keep intent and runtime reality aligned over time.

A Natural Fit for Telco AI Grids

Telcos are uniquely suited to build and operate AI grids. They already have distributed site infrastructure, existing power and connectivity assets, and established relationships with enterprise customers who need AI services delivered close to their users and data. AI grids are RAN-ready by design and flexible enough to run AI workloads, RAN workloads, or both on one unified platform, which means telcos don't need to choose between their existing network functions and new AI services. They can run both, on the same grid.

As access to intelligence becomes as critical as connectivity itself, telcos have a concrete opportunity to evolve from connectivity providers into AI service providers, delivering intelligence alongside network services and unlocking new recurring revenue streams built on distributed AI infrastructure.

Rafay's AI Grid Orchestration Solution is designed to accelerate that transition by standardizing onboarding and multi-operator execution across distributed environments, so that scaling the grid doesn't mean scaling operational overhead proportionally.